Is AutoML Dead?

Or is it just resting?

“LLMs can write code, so you can fire your data scientists.”

Oh, wait…

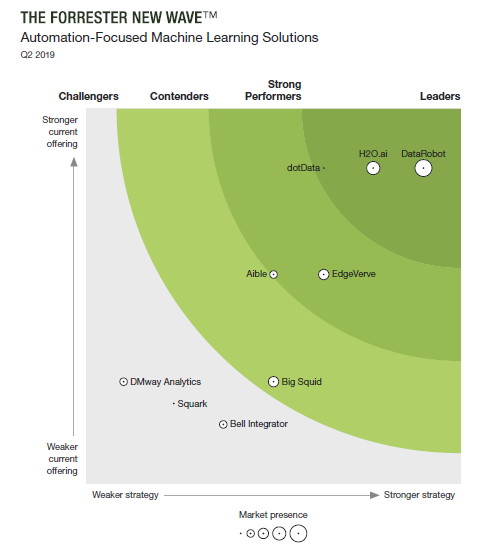

AutoML hype peaked in 2019. On May 28, 2019, Forrester published The Forrester New Wave: Automation-Focused Machine Learning Solutions. The report covered DataRobot, H2O.ai, and a bunch of no-hopers, including Aible, Bell Integrator, Big Squid, DMway, dotData, EdgeVerve, and Squark.

Where are they now? Seven years after Forrester evaluated “automation-focused machine learning solutions”, where are those companies now?

DataRobot focuses heavily on MLOps and “governance” with AI agents. That impresses Gartner, and the company has steadily improved its position in the Magic Quadrant for Data Science and Machine Learning Platforms. Perhaps that will be enough to get someone to buy the company.

H2O.ai offers numerous software products that aren’t a coherent suite. (For example, Driverless AI and H2O-3 have completely different architectures.) Gartner isn’t at all impressed with this.

Neither company has any hope of raising money or going public.

As to the rest:

Aible isn’t dead yet, just muddling along.

Bell Integrator sold out to Noventiq, a Cyprus-based company set up to avoid sanctions on Russia. A skeleton crew maintains the company’s TinyML tool.

Qlik acquired Big Squid in 2021 and rebranded it as Qlik Predict

DMway ceased operations in 2022. A moment of silence, please.

dotData now positions itself as a “feature factory”.

EdgeVerve is the rebranded corpse of Skytree Software, acquired by Infosys. The company now features agentic AI, of course.

Market Holdings, a bottom-feeder, acquired Squark and repositioned it to target nonprofits

Not exactly a stable of winners.

Forrester’s Mike Gualtieri was the first industry analyst to treat AutoML as a software category. He was also the last to do so, because the category was already dead. H2O and DataRobot already knew: standalone AutoML was a bridge to nowhere.

Models don’t drive value if you can’t deploy them.

H2O messed around with AWS Lambda, then partnered with Parallel M. That arrangement lasted three months, until DataRobot bought Parallel M. DataRobot treated the deal as an acquihire, since the software needed a lot of work, but it was worth it just to fuck with H2O.

Nisha Talagala at Parallel M coined the term “MLOps.” By design, MLOps is a chokepoint for applied machine learning; enterprises need a single control point for compliance and governance. Your MLOps solution must support all of your models, not just those built with AutoML.

When DataRobot and H2O ventured into MLOps, they were no longer competing purely as AutoML vendors; they were competing as general-purpose data science platforms, a different game entirely.

Completeness is the key to success for data science platforms. You must support the process from soup to nuts, from data to prediction, with tools for a range of user personas, and with enterprise security and governance.

Established data science vendors rapidly added AutoML features. When Forrester published its report in May, 2019, Dataiku, Google Cloud Platform, Microsoft Azure, RapidMiner, SAS, and KNIME had already done so. AWS and IBM followed soon thereafter.

The quality of those features varied widely. Google AutoML was pretty good, Azure AutoML was meh, and Dataiku’s was shit. SageMaker AutoPilot was surprisingly slow when first released; it used a clunky optimization algorithm that ran trivial experiments.

Not that it mattered. If your data science team standardized on Microsoft Azure, nobody cared if Azure AutoML was any good or not. If you wanted to use DataRobot or H2O, you would have to break the workflow, move data around, and port the model back to Azure Machine Learning for deployment. Few people were willing to do that.

DataRobot and H2O knew they would be outflanked. H2O built adorably named sidecars for Driverless AI. DataRobot bought failed startups and tied Engineering in knots integrating that mess. Top executives brayed about the future, presided over flat sales, and lined their pockets.

Forrester did not cover open source AutoML in its report. Analysts rarely cover open source software; there’s no payola. Anyway, you don’t need Forrester or Gartner to tell you if a FOSS package is any good; you download it and check it out yourself.

There were only a few open-source AutoML packages available in May 2019. Auto-WEKA, available since 2013, was a very good tool for the three people who used WEKA. TPOT, short for Tree-Based Pipeline Optimization, had limited functionality. H2O AutoML, a component of the H2O-3 open source distribution, did not do feature engineering. Auto-sklearn ran on top of scikit-learn, which made it attractive for a large audience of data scientists.

All four packages had tiny developer teams. TPOT was literally a one-man show. Auto-WEKA and auto-sklearn pushed updates annually. H2O.ai supported H2O AutoML, but the company steered most of its effort to the commercially licensed Driverless AI.

Open-source AutoML exploded after Forrester published its report. AWS Labs released AutoGluon the following December, and Microsoft Research released FLAML in 2020. Unlike their predecessors, these were serious projects backed by vendors with big budgets. With committed developers and a regular release schedule, AutoGluon and FLAML quickly displaced the older open source AutoML projects.

Moreover, they started to displace commercial AutoML software. When AutoGluon was a top choice for Kaggle competitors, customers started to wonder what they were getting for their DataRobot or H2O license fees.

They weren’t getting better models. If DataRobot or Driverless AI built better models than AutoGluon, the vendors would have published benchmarks.

Today, commercial AutoML software is dead. Open-source AutoML software, on the other hand, is thriving.

When ChatGPT launched, McKinsey MBAs rubbed their hands with glee. LLMs can write code. Data scientists write code. Ergo, we can fire all the data scientists, or at least most of them.

Not so fast, cowboy. There’s this small problem of context. True, LLMs know how to write Python syntax. But your out-of-the-box LLM doesn’t know anything about your data schema, your business problem, your runtime environment, your compliance rules, or a host of other things that data scientists need to know before they write a line of code. For an LLM to write useful data science code, a user must feed it that information.

That’s a lot harder than asking ChatGPT to write your term paper.

But that’s not all. Even if you feed all the required context to an LLM, it will spit out the code for one model. That’s nice. But data science is inherently iterative; until you run that model and validate it, you won’t know if it’s any good.

AutoML packages build and test many models to find the one that works best. If you want your LLM to do data science, you will sit there all week, feeding in results and waiting for it to spit out the next experiment.

No problem, said Microsoft. With FLAML’s built-in zero-shot modeling, you can one-shot a model. No more iteration! LLMs can do data science! “Zero-shot modeling” is Microsoft-speak for “we ran experiments and developed some decision rules so FLAML doesn’t start optimization from scratch.”

You can one-shot a model with FLAML if you’re willing to settle for models that suck.

“AI Agents can do iterative stuff, so we don’t need AutoML anymore.”

Oh, wait…

Sure, you can automate predictive modeling with AI agents. Use agents to provide an LLM with all the context it needs to build and run predictive models, capture test results, find an optimal model, and produce human-friendly artifacts so your stakeholders can review and approve the model.

Or you can run AutoGluon and be done with it.

Using AI agents to recreate AutoML is like training a dog to wait on tables.

Table waiting dogs would be a real draw for me to visit a restaurant at least once--just to see it done. That's about all i've got for llm automl too. I'm eagerly awaiting the OpenClaw Data Science headline that puts someone out of business. The best thing about human data scientists is that, while on average not very skilled or insightful, they can't work around the clock or while you sleep -- their stuff usually doesn't fuck up your business until you put the model in production and that still happens so rarely as to be a nominal risk. For safety's sake hire human data scientists but don't let them use openclaw. ;-)

As a DS who'se first job (2015-2019 at SparkBeyond - which did do Feature engineering, thankyouverymuch) - neat summary!

I hadn't thought about the mlOps part; our issues were more the clients actually managing to access their data and targets.